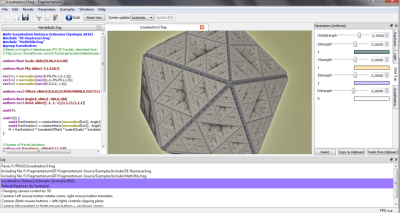

After gaining a bit of experience with GPU shader programming during my Fragmentarium development, a natural question to ask is: how fast are these GPUs?

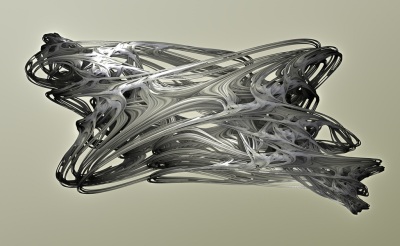

This is not an easy question to answer, and it depends on the specific application. But I will try to give an answer for the kind of systems that I’m interested in: pixel graphics systems, where each pixel can be calculated independently of the others, such as raytraced 3D fractals.

Let's take my desktop computer, a fairly standard machine, as an example. It is equipped with an Nvidia GeForce 9800GT GPU @ 1.5 GHz and an Intel Core 2 Quad Q8200 @ 2.33 GHz.

How many processing units are there?

Number of processing units (CPU): 4 CPU cores

Number of processing units (GPU): 112 Shader units

Based on these numbers, we might expect the GPU to be a factor of 28 faster than the CPU (112 / 4). Of course, this totally ignores the efficiency and operating speed of the processing units. Let's try looking at the processing power in terms of the maximum number of floating-point operations per second instead:

Theoretical Peak Performance

Both Intel and Nvidia list the GFLOPS (billion floating point operations per second) rating for their products. Intel’s list can be found here, and Nvidia’s here. For my system, I found the following numbers:

Performance (CPU): 37.3 GFLOPS

Performance (GPU): 504 GFLOPS

Based on these numbers, we might expect the GPU to be roughly a factor of 14 faster than the CPU (504 / 37.3 ≈ 13.5). But what do these numbers really mean, and can they be compared? It turns out that these numbers are obtained by multiplying the processor frequency by the maximum number of instructions per clock cycle.

For the CPU, we have four cores. Now, when Intel calculates its numbers, it does so based on the special 128-bit SSE registers found on every modern Pentium-derived CPU. These extensions make it possible to handle two double precision or four single precision floating point numbers per clock cycle. And in fact there exists a special instruction, the MAD (Multiply-Add) instruction, which allows for two arithmetic operations per clock cycle on each element in the SSE registers. This means Intel assumes 4 (cores) x 2 (double precision floats) x 2 (MAD instructions) = 16 instructions per clock cycle. This gives the theoretical peak performance stated above:

Performance (CPU): 2.33 GHz * 4 * 2 * 2 = 37.3 GFLOPS (double precision floats)

What about the GPU? Here we have 112 independent processing units. On the GPU architecture an even more benchmarking-friendly instruction exists: the MAD+MUL which combines two multiplies and one addition in a single clock cycle. This means Nvidia assumes 112 (cores) * 3 (MAD+MUL instructions) = 336 instructions per clock cycle. Combining this with a stated processing frequency of 1.5 GHz, we arrive at the number stated earlier:

Performance (GPU): 1.5 GHz * 112 * 3 = 504 GFLOPS (single precision floats)

But wait… Nvidia's numbers are for single precision floats, and the GeForce 9800GT does not even support double precision floats. So for a fair comparison we should double Intel's number, since the SSE extensions allow four single precision numbers to be processed simultaneously instead of two double precision ones. This way we get:

Performance (CPU): 2.33 GHz * 4 * 4 * 2 = 74.6 GFLOPS (single precision floats)

Now, using this as a guideline, we would expect my GPU to be a factor of 6.8 faster than my CPU (504 / 74.6). But we have made some pretty big assumptions here. For instance, not many CPU programmers write SSE-optimized code, and is a modern C++ compiler powerful enough to take advantage of the SSE instructions automatically? And how often is the GPU able to use the three-operation MAD+MUL instruction?
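The peak-performance arithmetic above can be reproduced in a few lines. This is only a sketch of the multiplication described in the text; the helper name `peak_gflops` and its parameter names are my own, and the inputs are the clock speeds, unit counts, and per-cycle assumptions quoted above.

```python
def peak_gflops(clock_ghz, units, lanes, ops_per_lane):
    """Theoretical peak: clock (GHz) x units x SIMD lanes x operations per lane per cycle."""
    return clock_ghz * units * lanes * ops_per_lane

# Figures quoted in the text (vendor assumptions, not measurements).
cpu_double = peak_gflops(2.33, 4, 2, 2)   # 4 cores, 2 doubles per SSE register, MAD
cpu_single = peak_gflops(2.33, 4, 4, 2)   # 4 cores, 4 floats per SSE register, MAD
gpu_single = peak_gflops(1.5, 112, 1, 3)  # 112 shader units, scalar, MAD+MUL

print(f"CPU double precision: {cpu_double:.1f} GFLOPS")
print(f"CPU single precision: {cpu_single:.1f} GFLOPS")
print(f"GPU single precision: {gpu_single:.1f} GFLOPS")
print(f"GPU/CPU ratio (single precision): {gpu_single / cpu_single:.1f}x")
```

Note that only the last ratio compares like with like: the 504 / 37.3 figure mixes single and double precision.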

A real-world experiment

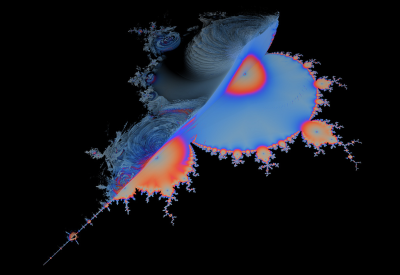

To find out, I wrote a simple 2D Mandelbrot system and benchmarked it on the CPU and GPU. This is really the kind of computational task that I'm interested in: it is trivial to parallelize, it is not memory-intensive, and the majority of the executed code is floating point arithmetic. I did not try to optimize the C++ code, because I wanted to see if the compiler was able to perform some SSE optimization for me. Here are the execution times:

13941 ms – CPU single precision (x87)

13941 ms – CPU double precision (x87)

10535 ms – CPU single precision (SSE)

11367 ms – CPU double precision (SSE)

424 ms – GPU single precision

(These numbers have some caveats. I performed the tests multiple times and discarded the first few runs, but the CPU code was only single-threaded, so I assumed the numbers would scale perfectly and divided the execution times by four. I also verified, by checking the generated assembly code, that SSE instructions were indeed used for the core Mandelbrot loop when they were enabled.)

There are a couple of things to notice here. First, there is almost no difference between single and double precision on the CPU. This is to be expected for the x87 compiled code (since the x87 defaults to 80-bit precision anyway), but for the SSE version we would expect single precision to be twice as fast. Second, the SSE code is really not much more efficient than the x87 code, which strongly suggests that the compiler (here Visual Studio C++ 2008) is not very good at optimizing for SSE.
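The benchmark source is not reproduced here, so the following is only a minimal sketch of the kind of per-pixel escape-time loop such a test exercises. The function names, the viewport, and the iteration limit are assumptions of mine, and a Python version says nothing about the timings above; it just shows why the task parallelizes trivially: each pixel depends only on its own coordinates.

```python
def mandelbrot_iterations(cx, cy, max_iter=1000):
    """Count iterations of z = z*z + c until |z| > 2, or return max_iter."""
    zx, zy = 0.0, 0.0
    for i in range(max_iter):
        # Complex multiply-add written out in real arithmetic.
        zx, zy = zx * zx - zy * zy + cx, 2.0 * zx * zy + cy
        if zx * zx + zy * zy > 4.0:
            return i
    return max_iter

def render(width, height, max_iter=100):
    """Evaluate every pixel independently (assumed viewport: [-2, 1] x [-1.5, 1.5])."""
    rows = []
    for py in range(height):
        cy = -1.5 + 3.0 * py / (height - 1)
        row = []
        for px in range(width):
            cx = -2.0 + 3.0 * px / (width - 1)
            row.append(mandelbrot_iterations(cx, cy, max_iter))
        rows.append(row)
    return rows

image = render(8, 6, max_iter=50)
print(len(image), "rows of", len(image[0]), "pixels")
```

On a GPU the body of `mandelbrot_iterations` would be the fragment shader, and the two loops in `render` disappear because the hardware runs one invocation per pixel.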

So for this example we got a speedup of a factor of 25 by using the GPU instead of the CPU (10535 ms / 424 ms, comparing the fastest CPU result with the GPU).

“Measured” GFLOPS

Another question is how this example compares to the theoretical peak performance. By using Nvidia's Cg SDK I was able to get the GPU assembly code. Since I could now count the number of instructions in the main loop, and I knew how many iterations were performed, I was able to calculate the actual number of floating point operations per second:

GPU: 211 (Mandel)GFLOPS

CPU: 8.4 (Mandel)GFLOPS*

(*The CPU number was obtained by assuming the number of instructions in the core loop was the same as for the GPU: in reality, the CPU disassembly showed that the simple loop was partially unrolled to more than 200 lines of very complex assembly code.)

Compared to the theoretical maximum numbers of 504 GFLOPS and 74.6 GFLOPS respectively, this shows the GPU is much closer to its theoretical limit than the CPU: roughly 42% of peak versus roughly 11%.
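The calculation behind these "measured" figures is simple enough to sketch. The instruction count and total iteration count are not given in this post, so the arguments in the first call below are purely hypothetical placeholders; only the 211, 8.4, 504, and 74.6 GFLOPS figures come from the text.

```python
def measured_gflops(flops_per_iteration, total_iterations, seconds):
    """Achieved throughput: operations actually executed divided by wall-clock time."""
    return flops_per_iteration * total_iterations / seconds / 1e9

# Hypothetical inputs, for illustration only (not the real loop counts).
example = measured_gflops(flops_per_iteration=10, total_iterations=1_000_000_000, seconds=0.5)
print(f"example: {example:.1f} GFLOPS")

# Fraction of theoretical peak, using the figures quoted in the text.
gpu_fraction = 211 / 504
cpu_fraction = 8.4 / 74.6
print(f"GPU reaches {gpu_fraction:.1%} of its single-precision peak")
print(f"CPU reaches {cpu_fraction:.1%} of its single-precision peak")
```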

GPU Caps Viewer – OpenCL

A second test was performed using the GPU Caps Viewer. This particular application includes a 4D Quaternion Julia fractal demo in OpenCL. This is interesting since OpenCL is a heterogeneous platform: the same code can be compiled for both CPU and GPU. And since Intel just released an alpha version of their OpenCL SDK, I could compare it to Nvidia's SDK.

The results were interesting:

Intel OpenCL: ~5 fps

Nvidia OpenCL: ~35 fps

(The FPS did vary through the animation, so these numbers are not very accurate. There was no dedicated benchmark mode.)

This suggests that Intel's OpenCL compiler is actually able to take advantage of the SSE instructions and provides a highly optimized output. Either that, or Nvidia's OpenCL implementation is not very efficient (which is not likely).

The OpenCL-based benchmark showed my GPU to be approximately 7 times faster than my CPU, which is almost exactly what comparing the theoretical GFLOPS values (for single precision) predicts.
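To put the two predictions and the two measurements side by side, here is a small sketch using only the numbers quoted in this post (the dictionary labels are mine, and the OpenCL frame rates are the rough values noted above):

```python
# Predicted GPU/CPU ratios from the two back-of-the-envelope models.
predicted = {
    "unit count (112 / 4)": 112 / 4,
    "peak single-precision GFLOPS (504 / 74.6)": 504 / 74.6,
}

# Measured GPU/CPU ratios from the two experiments.
measured = {
    "Mandelbrot, C++ vs GLSL (10535 ms / 424 ms)": 10535 / 424,
    "OpenCL Julia (~35 fps / ~5 fps)": 35 / 5,
}

for label, ratio in {**predicted, **measured}.items():
    print(f"{label:45s} {ratio:5.1f}x")
```

The plain C++ result lands near the unit-count ratio, while the OpenCL result lands near the peak-GFLOPS ratio.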

Conclusion

For normal code written in a high-level language like C or GLSL (multithreaded, single precision, and without explicit SSE instructions) the computational power is roughly proportional to the number of cores or shader units. For my system this makes the GPU a factor of 25 faster.

Even though the CPU cores have a higher operating frequency and in principle could execute more instructions via their SSE registers, this does not seem to be fully utilized (and in fact, compiling with and without SSE optimization did not make a significant difference, even for this very simple example).

The OpenCL example tells another story: here the measured performance was proportional to the theoretical GFLOPS ratings. This is interesting since it indicates that OpenCL could also be useful for CPU applications.

One thing to bear in mind is that the examples tested here (the Mandelbrot and 4D Quaternion Julia) are very well-suited for GPU execution. For more complex code, with conditional branching, double precision floating point operations, and non-coalesced memory access, the CPU is much more efficient than the GPU. So for a desktop computer such as mine, a factor of 25 is probably the best you can hope for (and it is indeed a very impressive speedup for any kind of code).

It is also important to remember that GPUs are not magical devices. They perform operations with a theoretical peak performance typically 5-15 times larger than that of a CPU. So whenever you see these 1000x speedup claims (e.g. some of the CUDA showcases), it is probably just an indication of a poor CPU implementation.

But even though the performance of GPUs may be somewhat exaggerated, you can still get a tremendous speedup. And GPU interfaces such as GLSL shaders are really simple to use: you do not need to deal explicitly with threads, you have built-in vectors and matrices, and you can compile GLSL code dynamically at run-time. All of these features make GPU programming nearly ideal for exploring pixel graphics systems.